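Reader fatigue in medical imaging often leads to medical errors. Of the more than 11,000 preventable hospital deaths recorded each year in NHS acute hospitals in the UK, more than 8,000 are attributed to diagnostic error.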

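Interpreting medical images is challenging: the work is repetitive, and errors (false negatives) are relatively common. Radiologists also develop visual fatigue from reviewing images on a computer screen for long, sustained periods. Studies have found that radiologists are more likely to make interpretation errors at the end of the workday than at the start.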

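Blinded independent central review (BICR) is one of the approaches the FDA recommends for registrational oncology clinical trials (1). In oncology trials, objective assessment of clinical measures that rely on radiological imaging is an important endpoint consideration. During BICR, readers are routinely monitored using a variety of models to ensure read quality.

"If a reader is participating in several clinical trials at once, the reader disagreement index can serve as an early indicator for anticipating that reader's subsequent read quality and potential fatigue."

- Manish Sharma, MD, Vice President of Medical Imaging, Calyx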

Methods for Monitoring Reader Performance

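Monitoring reader performance is not only a regulatory requirement but also a key part of proactive intervention throughout a trial. Monitoring helps ensure higher-quality radiological assessments and reinforces adherence to the specific trial protocol. The BICR process always carries inherent variability, which stems from differences in reviewers' backgrounds, training, and human nature. That variability is a given; the open question is how to track reader performance effectively and improve it proactively.

"Double read with adjudication" is one of the models commonly used in oncology clinical trial review, and it can yield better radiological assessments than a single-read model. When properly monitored, an adjudicated double read makes the BICR process more rigorous and reliable and keeps variability, or even minor errors, from affecting study results.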

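Adjudication rate (AR) is one metric used to track reader performance, but it cannot pinpoint problems in an individual reader's performance. AR is influenced by many external variables, including the adjudication triggers, the endpoint, the indication, and tumor burden. Adjudicator agreement rate (AAR) is a relative performance metric: for a given reader, a higher AAR indicates better read performance.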
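To make the two metrics concrete, here is a minimal Python sketch. It assumes the common working definitions (AR as the share of double-read cases that went to adjudication, AAR as the share of a reader's adjudicated cases in which the adjudicator sided with that reader); the post itself does not spell these out. The `DoubleReadCase` record and its field names are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class DoubleReadCase:
    """One double-read case (hypothetical record layout)."""
    case_id: str
    reader_a: str
    reader_b: str
    adjudicated: bool                       # True if the reads were discordant enough to trigger adjudication
    adjudicator_pick: Optional[str] = None  # reader the adjudicator agreed with, if adjudicated


def adjudication_rate(cases: Sequence[DoubleReadCase]) -> float:
    """Assumed definition: adjudicated cases / all double-read cases."""
    if not cases:
        raise ValueError("no cases supplied")
    return sum(c.adjudicated for c in cases) / len(cases)


def adjudicator_agreement_rate(cases: Sequence[DoubleReadCase], reader: str) -> float:
    """Assumed definition: share of the reader's adjudicated cases won by that reader."""
    adjudicated = [
        c for c in cases
        if c.adjudicated and reader in (c.reader_a, c.reader_b)
    ]
    if not adjudicated:
        raise ValueError(f"no adjudicated cases for reader {reader!r}")
    agreed = sum(c.adjudicator_pick == reader for c in adjudicated)
    return agreed / len(adjudicated)


# Illustrative data only: 10 cases, 4 adjudicated, adjudicator sides with R1 in 3.
cases = (
    [DoubleReadCase(f"C{i}", "R1", "R2", False) for i in range(6)]
    + [DoubleReadCase(f"C{i}", "R1", "R2", True, "R1") for i in range(6, 9)]
    + [DoubleReadCase("C9", "R1", "R2", True, "R2")]
)

ar = adjudication_rate(cases)
aar_r1 = adjudicator_agreement_rate(cases, "R1")
aar_r2 = adjudicator_agreement_rate(cases, "R2")
print(f"AR = {ar:.0%}, AAR(R1) = {aar_r1:.0%}, AAR(R2) = {aar_r2:.0%}")
```

Both readers share the same AR here, which illustrates why AR alone cannot single out a reader, while AAR separates them.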

Using the Reader Disagreement Index to Assess Reader Fatigue

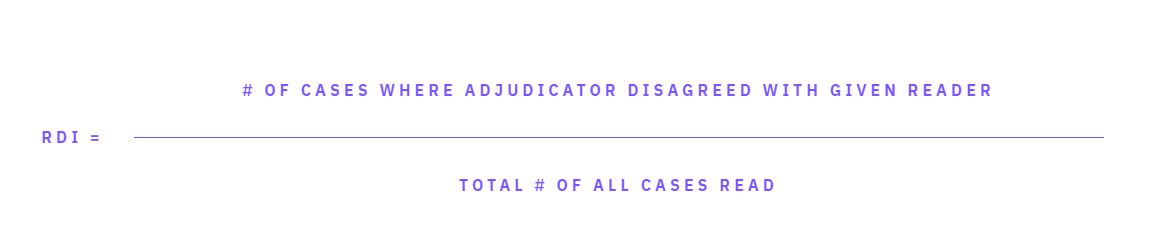

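In a previous blog post, we described how the reader disagreement index (RDI) assesses the cases in which the adjudicator disagreed with a primary reader's assessment, comparing the number of such cases with the total number of cases read. RDI reflects both a study's overall AR and each reader's individual AAR. It is the percentage of all cases read for which the adjudicator did not endorse the reader's result. As defined below, a low RDI indicates better reader performance, and a high RDI indicates poorer performance.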

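$$
\mathrm{RDI} = \frac{\text{number of cases in which the adjudicator disagreed with the specific reader}}{\text{total number of cases read}}
$$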
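The ratio is simple enough to compute directly. The sketch below is illustrative only: the function name and the counts are made up. It also checks one way to read the statement that RDI reflects both AR and AAR: if AR is taken over the same reader's cases, then RDI equals AR × (1 − AAR). That decomposition is an assumption that follows from the definitions used here, not a formula stated in the post.

```python
import math


def reader_disagreement_index(disagreed_cases: int, total_cases_read: int) -> float:
    """RDI as a fraction: cases where the adjudicator disagreed with the
    reader, divided by all cases that reader read."""
    if total_cases_read <= 0:
        raise ValueError("total_cases_read must be positive")
    if not 0 <= disagreed_cases <= total_cases_read:
        raise ValueError("disagreed_cases must be between 0 and total_cases_read")
    return disagreed_cases / total_cases_read


# Hypothetical reader: 200 cases read, 60 adjudicated,
# adjudicator agreed with this reader in 42 of those 60.
total_read = 200
adjudicated = 60
agreed = 42
disagreed = adjudicated - agreed  # 18 cases

rdi = reader_disagreement_index(disagreed, total_read)
print(f"RDI = {rdi:.1%}")  # RDI = 9.0%

# Assumed decomposition: RDI = AR * (1 - AAR), with AR over this reader's cases.
reader_ar = adjudicated / total_read
reader_aar = agreed / adjudicated
assert math.isclose(rdi, reader_ar * (1 - reader_aar))
```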

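Not surprisingly, the number of studies a reader participates in and the way cases are distributed across those studies may be related. Spreading cases across too many studies can become a major driver of reader fatigue, for example when a reader reads for 10 different trials within 2 hours. Ideally, case assignment is carefully balanced so that most of the cases a reader sees in a single read session come from the same study.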
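One rough way to quantify how fragmented a read session is: count the distinct studies in the session and the share of cases that come from the most common one. This is a hypothetical helper for illustration, not a metric defined in the post, and the study labels are invented.

```python
from collections import Counter
from typing import Dict, Sequence, Union


def session_study_mix(study_ids: Sequence[str]) -> Dict[str, Union[int, float]]:
    """Summarize one read session given the study ID of each case read, in order."""
    if not study_ids:
        raise ValueError("session contains no cases")
    counts = Counter(study_ids)
    _, dominant_count = counts.most_common(1)[0]
    return {
        "distinct_studies": len(counts),
        "dominant_share": dominant_count / len(study_ids),
    }


# A focused session: 8 of 10 cases come from one study.
focused = session_study_mix(["S1"] * 8 + ["S2"] * 2)

# A fragmented session: 10 cases from 10 different studies.
fragmented = session_study_mix([f"S{i}" for i in range(10)])

print(focused)
print(fragmented)
```

A session with a high dominant share is closer to the ideal described above; a low share with many distinct studies matches the "10 trials in 2 hours" pattern.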

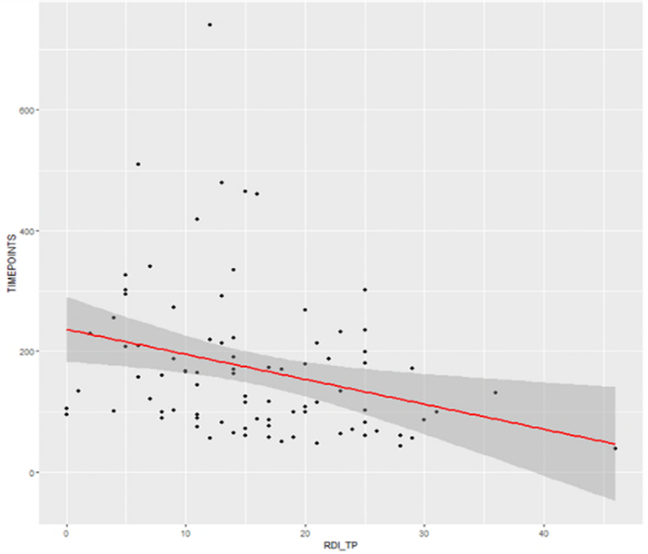

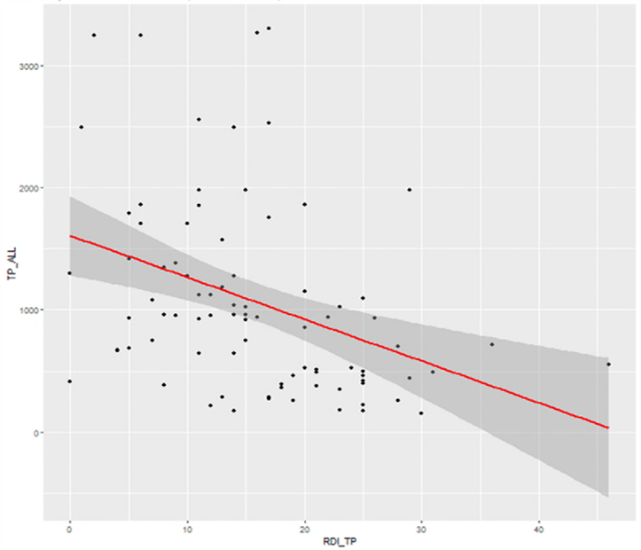

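As shown in the figure, a decrease in the number of time points (Y axis) is weakly correlated with an increase in RDI (X axis). By monitoring the RDI of each study per week or per read session, and how it relates to the overall RDI and to the RDI of other studies, RDI can provide early insight into trends.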
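The monitoring described above could be sketched as follows: compute a reader's RDI per (week, study) bucket and compare each bucket with that reader's overall RDI. The input layout, the function names, and the 1.5× flagging ratio are all assumptions chosen for illustration; the post does not prescribe a threshold.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Read = Tuple[int, str, bool]  # (week number, study ID, adjudicator disagreed with reader)
Bucket = Tuple[int, str]      # (week number, study ID)


def rdi_by_week_and_study(reads: Iterable[Read]) -> Dict[Bucket, float]:
    """RDI for each (week, study) bucket of one reader's reads."""
    tallies: Dict[Bucket, List[int]] = defaultdict(lambda: [0, 0])
    for week, study, disagreed in reads:
        tally = tallies[(week, study)]
        tally[0] += int(disagreed)  # disagreements in this bucket
        tally[1] += 1               # all reads in this bucket
    return {bucket: d / n for bucket, (d, n) in tallies.items()}


def flag_elevated(per_bucket: Dict[Bucket, float], overall_rdi: float,
                  ratio: float = 1.5) -> List[Bucket]:
    """Buckets whose RDI exceeds ratio * overall RDI (the ratio is an arbitrary assumption)."""
    return sorted(b for b, rdi in per_bucket.items() if rdi > ratio * overall_rdi)


# Illustrative data: 10 reads per bucket.
reads: List[Read] = (
    [(1, "A", i < 1) for i in range(10)]    # week 1, study A: 1 disagreement
    + [(2, "A", i < 1) for i in range(10)]  # week 2, study A: 1 disagreement
    + [(2, "B", i < 4) for i in range(10)]  # week 2, study B: 4 disagreements
)

per_bucket = rdi_by_week_and_study(reads)
overall = sum(d for _, _, d in reads) / len(reads)
flagged = flag_elevated(per_bucket, overall)
print(per_bucket)
print(f"overall RDI = {overall:.0%}, flagged buckets = {flagged}")
```

In this made-up data, study B in week 2 stands out against the reader's overall RDI, which is the kind of early signal the paragraph above refers to.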

References

1. Guidance for Industry Developing Medical Imaging Drug and Biologic Products. Part 3: Design, Analysis, and Interpretation of Clinical Studies. US Department of Health and Human Services. Food and Drug Administration. Center for Drug Evaluation and Research. Center for Biologics Evaluation and Research; 2004.

2. Clinical Trial Imaging Endpoints Process Standards Guidance for Industry Draft. US Department of Health and Human Services. Food and Drug Administration. Center for Drug Evaluation and Research. Center for Biologics Evaluation and Research. March 2015 Revision 1.

3. Manish Sharma, Madhuri Madasu, Sree Sudha Kota, Surabhi Bajpai, Yibin Shao, Srinivas Pasupuleti, Michael O’Connor, “Using reader disagreement index as a tool for monitoring impact on read quality due to reader fatigue in central reviewers,” Proc. SPIE 12035, Medical Imaging 2022: Image Perception, Observer Performance, and Technology Assessment, 120350J (4 April 2022); doi: 10.1117/12.2613082